How to Identify Bias in Materiality Assessments

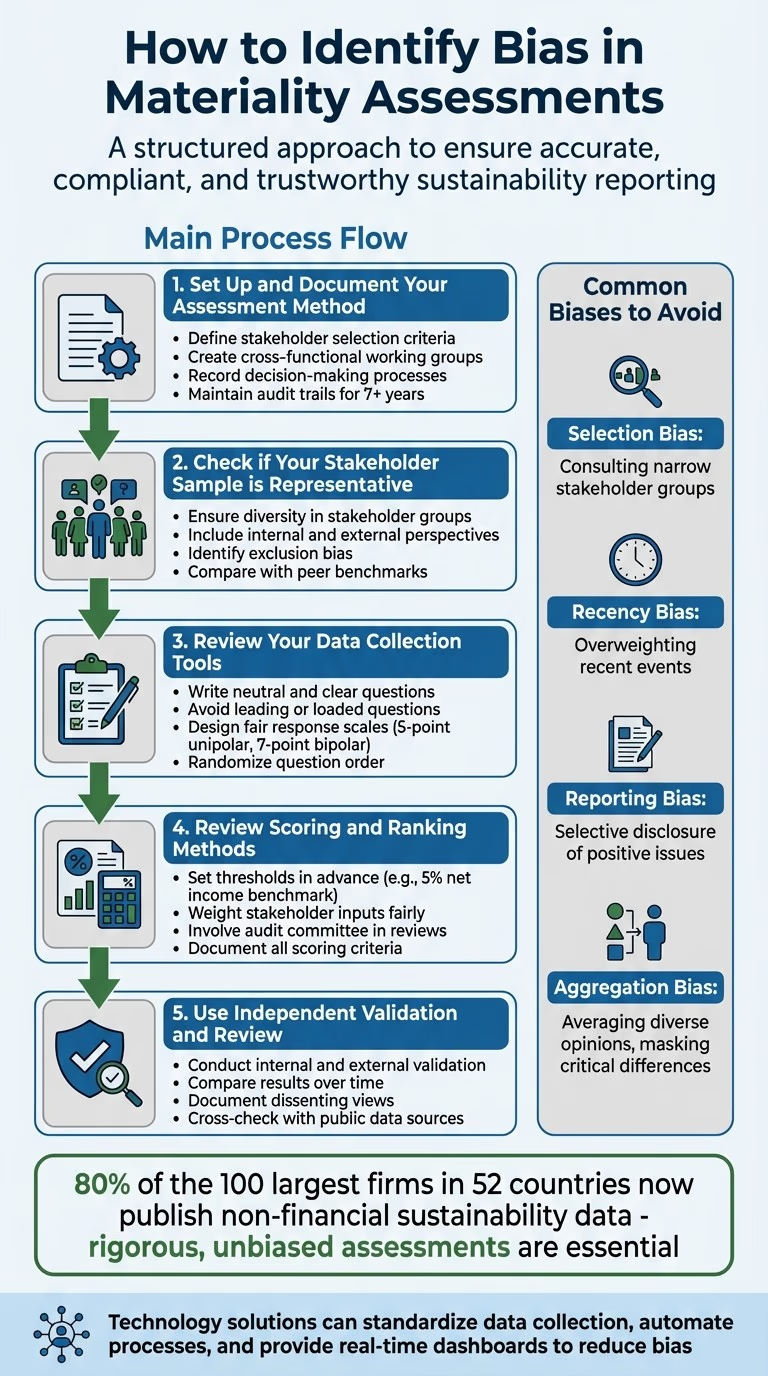

Materiality assessments help organisations determine which sustainability issues matter most to their business and stakeholders. But bias can undermine their accuracy, damage trust, and lead to compliance risks. Common biases include:

- Selection Bias: Involves consulting a narrow group of stakeholders, leading to skewed results.

- Recency/Reporting Bias: Prioritises recent events or selectively highlights positive issues.

- Aggregation Bias: Averages diverse opinions, masking critical differences.

To avoid these pitfalls:

- Document Your Process: Establish clear methods, stakeholder criteria, and audit trails.

- Ensure Representativeness: Include diverse stakeholders and address exclusion risks.

- Refine Data Collection: Use neutral, clear questions and balanced response scales.

- Review Scoring Methods: Set thresholds in advance and avoid subjective rankings.

- Use Independent Validation: Engage reviewers for unbiased oversight.

Technology can also reduce bias by standardising data collection, automating processes, and providing real-time dashboards. Together, these steps help keep your assessments accurate, compliant, and trustworthy.

5-Step Process to Identify and Eliminate Bias in Materiality Assessments

Where Bias Enters Materiality Assessments

Spotting bias in materiality assessments is crucial for ensuring accuracy and fairness. Paul Munter, Acting Chief Accountant at the SEC, highlights the importance of objectivity:

An assessment where a registrant's, auditor's, or audit committee's biases... influenced a determination that an error is not material... would not be objective and would be inconsistent with the concept of materiality.

There are three main types of bias that can distort your reporting, each with unique challenges and consequences.

Selection Bias

Selection bias happens when the group of stakeholders you consult doesn’t reflect the broader population. Relying on familiar contacts who already share your perspective can skew results toward your existing agenda. Deloitte warns against this:

Neglecting adequate stakeholder inclusion - not engaging a broad spectrum of internal and external stakeholders, or focusing on an inadequate, imbalanced set of stakeholders - can lead to an incomplete understanding of material issues.

This oversight can leave out critical voices, especially those from marginalised groups or emerging sectors, creating gaps in compliance with frameworks like UK SRS or ISSB.

Recency and Reporting Bias

Recency bias gives undue weight to recent events, while reporting bias stems from selective disclosure, both of which can distort your materiality matrix. The Financial Reporting Council observes:

Companies rarely have a formal process or guidelines for determining what is qualitatively material financial information. Instead, companies typically have an informal 'gut feeling' understanding of 'what's important'.

This lack of structure often results in recent, high-profile incidents dominating the assessment, while long-term, systemic issues go unnoticed. As one investor told the FRC:

What's material today might change in 12- or 24-months' time. You can't just work off your old materiality assessment, it needs to be current.

Aggregation Bias

Aggregation bias arises when diverse opinions are averaged into a single score, masking critical differences. This approach can obscure outlier views and high-impact issues. For instance, a sustainability concern might appear moderately important when, in reality, half of your stakeholders consider it crucial, while the other half dismiss it entirely. Such polarisation signals a material risk that averaging fails to capture.

With 80% of the 100 largest firms in 52 countries now publishing non-financial sustainability data, the need for rigorous, unbiased assessments has never been greater. Yet, aggregation bias remains a common pitfall.
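The polarisation example above can be made concrete with a few lines of Python. This is a minimal sketch, assuming a 1 to 5 importance scale; the function names and the 40% cut-off are illustrative choices, not part of any reporting framework.

```python
from statistics import mean, pstdev

def summarise_scores(scores, scale_min=1, scale_max=5):
    """Return the mean alongside simple polarisation signals."""
    midpoint = (scale_min + scale_max) / 2
    low = sum(1 for s in scores if s < midpoint)
    high = sum(1 for s in scores if s > midpoint)
    return {
        "mean": mean(scores),
        "spread": pstdev(scores),          # population standard deviation
        "share_low": low / len(scores),    # share scoring below the midpoint
        "share_high": high / len(scores),  # share scoring above the midpoint
    }

def is_polarised(summary, min_share=0.4):
    """Flag topics where both ends of the scale hold a large share of responses.

    The 0.4 cut-off is an assumption for illustration only.
    """
    return summary["share_low"] >= min_share and summary["share_high"] >= min_share

# Half the stakeholders say "crucial" (5), half say "irrelevant" (1).
polarised = summarise_scores([1, 1, 1, 1, 1, 5, 5, 5, 5, 5])
# Everyone agrees the topic is moderately important (3).
consensus = summarise_scores([3, 3, 3, 3, 3, 3, 3, 3, 3, 3])

print(polarised["mean"], is_polarised(polarised))
print(consensus["mean"], is_polarised(consensus))
```

Both topics share the same mean, so a matrix built on averages alone would plot them in the same place. Reporting the spread and the split alongside the mean keeps the disagreement visible.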

Recognising these biases is the first step toward building more robust and reliable assessment processes. Following a materiality documentation review can help identify these gaps before they impact reporting.

Step 1: Set Up and Document Your Assessment Method

Having a clear and verifiable process is crucial to avoid bias. Without proper documentation, materiality assessments can easily become informal and inconsistent, relying too much on subjective judgement. This not only risks incomplete reporting but also invites regulatory scrutiny, especially under frameworks like ISSB reporting.

Define Stakeholder Selection Criteria

Start by creating a cross-functional materiality working group. Include team members from finance, operations, sustainability, HR, commercial, and procurement departments. This approach ensures diverse perspectives and avoids the pitfalls of working in silos.

"It all starts with good governance. The board needs to have a grip on what's material to stakeholders".

To capture material issues effectively, align the board’s strategy with operational insights. Use both top-down and bottom-up approaches to avoid overlooking important issues that might not be visible to a single department. Document every decision, specifying how stakeholders were chosen, the groups they represent, and their relevance to your value chain. A non-executive director highlighted:

"Materiality is not just a mechanical thing that you add up for misstatements".

Once these decisions are made, formalise them into a detailed audit trail.

Record Decision-Making Processes

Develop an audit trail that explains how material topics and thresholds were identified. This documentation should include:

- Stakeholder identification and engagement planning (such as contact databases, relationship maps, materiality justifications, methodologies, timelines, and framework alignments).

- Data validation steps (like cross-checks, resolving discrepancies, and approval records).

- Change management records (including version logs, modification details, and approvals).

Keep this evidence for as long as your applicable regulations require; seven years is a commonly used retention period, but confirm the figure for your jurisdiction. A robust audit trail not only satisfies compliance standards but also strengthens stakeholder trust in your process, forming a solid base for the following steps in your assessment.
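One way to picture such an audit trail is as an append-only log of immutable records. The sketch below is an assumption about how the three record types listed above might be structured; the field names and categories are hypothetical, not a prescribed schema.

```python
from dataclasses import dataclass

# Hypothetical categories mirroring the three record types listed above.
ALLOWED_CATEGORIES = {"stakeholder", "validation", "change"}

@dataclass(frozen=True)  # frozen: an entry cannot be edited once created
class AuditEntry:
    version: int
    entry_date: str   # ISO 8601 date, e.g. "2025-01-10"
    category: str
    description: str
    approved_by: str

def append_entry(log, entry):
    """Return a new log with the entry added; earlier entries are never changed."""
    if entry.category not in ALLOWED_CATEGORIES:
        raise ValueError(f"unknown category: {entry.category}")
    if log and entry.version <= log[-1].version:
        raise ValueError("version numbers must strictly increase")
    return [*log, entry]

log = []
log = append_entry(log, AuditEntry(
    1, "2025-01-10", "stakeholder",
    "Supplier panel selection criteria agreed", "Working group chair"))
log = append_entry(log, AuditEntry(
    2, "2025-02-03", "change",
    "Water use threshold revised after board review", "Audit committee"))

for entry in log:
    print(entry.version, entry.entry_date, entry.category, entry.description)
```

Rejecting out-of-sequence versions and freezing each entry means the log can only grow, which is the property a reviewer looks for when tracing how a decision was reached.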

Step 2: Check if Your Stakeholder Sample is Representative

Make sure your stakeholder sample captures all relevant perspectives. If your group is too narrow, you risk selection bias, which can lead to materiality assessments that miss key risks or opportunities.

Diversity in Stakeholder Groups

Including a wide range of stakeholders helps minimise the risk of overlooking important issues that might escape a single department or viewpoint. The Financial Reporting Council highlights this point:

"Joining-up viewpoints and bringing together diverse teams across the organisation helps with connecting issues. It also serves as a completeness check, reducing the risk of missing something crucial".

Your internal team should bring cross-functional expertise to the table, while external stakeholders should represent a mix of geographies, sectors, and roles - such as suppliers, customers, regulators, investors, employees, and community groups.

Combine top-down reviews, which align with corporate strategy, with bottom-up approaches like employee interviews or investor feedback to uncover less obvious insights.

As one non-executive director observed:

"If the market has a different view than management, it's important to ask – do they have a point? Is it something we haven't seen or that management maybe doesn't want to see?"

Peer benchmarking can also help validate your process. Compare your stakeholder engagement and material topics with those of similar organisations. For instance, if your peers are engaging with groups you've excluded, it may highlight a gap in your assessment. This is especially crucial under frameworks like ISSB, where investor-focused materiality depends on comprehensive stakeholder mapping.

A well-rounded stakeholder sample ensures your materiality outcomes are both reliable and audit-ready.

Identifying Exclusion Bias

Once you've ensured diversity, check that no critical groups have been left out. Exclusion bias occurs when certain perspectives are systematically omitted, whether intentionally or not. To spot this, conduct a stakeholder mapping exercise to visualise any gaps. If you've excluded groups, document your reasons in the audit trail.

A simple comparison table can help track which stakeholder categories you've included versus those typically engaged by your industry peers. For example, if you've deprioritised certain suppliers because they account for a small portion of your spend, consider whether their environmental or social impact might still make them material.
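The comparison described above amounts to a set difference. Here is a minimal sketch, assuming you have a list of the stakeholder categories each peer reports engaging; the peer names and categories are made up for illustration.

```python
def stakeholder_gaps(engaged, peer_engaged):
    """Count how many peers engage each category that we have not engaged.

    engaged: set of categories in our own assessment.
    peer_engaged: dict mapping a peer's name to its set of categories.
    Returns a dict ordered by peer count (highest first), then by name.
    """
    counts = {}
    for peer_groups in peer_engaged.values():
        for group in peer_groups - engaged:
            counts[group] = counts.get(group, 0) + 1
    return dict(sorted(counts.items(), key=lambda kv: (-kv[1], kv[0])))

# Hypothetical data for illustration only.
ours = {"investors", "employees", "customers"}
peers = {
    "Peer A": {"investors", "employees", "suppliers", "regulators"},
    "Peer B": {"investors", "customers", "suppliers"},
}

print(stakeholder_gaps(ours, peers))
```

A category that several peers engage and you do not is not automatically a gap, but it is a prompt to record in the audit trail why it was left out.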

Cross-functional working groups are invaluable for avoiding exclusion bias. Team members from different departments can offer practical insights that senior management might miss. Keeping detailed attendance logs and meeting notes can support this validation process internally.

Finally, compare stakeholder input with public data sources. If your findings show low concern about an issue but external reports, media coverage, or regulatory trends suggest otherwise, investigate the discrepancy. Document how you address these differences to strengthen your audit trail and ensure your assessment remains fair and unbiased.

Step 3: Review Your Data Collection Tools

Once you've ensured a representative sample of stakeholders, it's time to take a closer look at your data collection methods. Poorly crafted surveys or interviews can skew responses, leading to inaccurate results. Solid data collection practices are essential for producing reliable, audit-ready materiality assessments and ensuring compliance with regulatory standards.

Writing Neutral and Clear Questions

The way you phrase your questions can make or break the quality of your data. To gather unbiased insights:

- Avoid leading questions. These suggest a preferred answer. For instance, instead of asking, "Don't you agree our tool is easy to use?" try: "How would you rate the ease of use of our tool?"

- Stay clear of loaded questions. These embed assumptions. Asking, "What do you like most about our excellent service?" presumes the respondent already thinks your service is excellent. A better alternative would be: "How would you rate our customer service?"

- Split double-barrelled questions. Questions combining multiple issues, like "How satisfied are you with our pricing and support?" should be broken into separate queries.

- Avoid double negatives. Phrases like "Was the facility not unclean?" can confuse respondents and lead to inaccurate answers. Simplify it to: "How would you rate the cleanliness of the facility?"

- Use plain language. Avoid jargon or technical terms like "UX", "OKRs", or "SECR compliance" that might confuse participants unfamiliar with these concepts.

- Steer clear of absolute terms. Words like "always", "never", or "every" can force respondents into binary answers that don't reflect reality. Instead of "Do you always use Product X for cleaning?" ask: "How often do you use Product X for cleaning?".

- Randomise question order. Shuffling questions and answer options helps reduce anchoring bias, where earlier questions influence later responses.

- Provide opt-out options. Choices like "Other", "Not Applicable", or "Prefer not to say" allow respondents to skip questions that don't apply to them, ensuring more reliable data. This is especially crucial when analysing stakeholder feedback to align ESG data with ISSB standards, where accuracy is key.
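The randomisation point in the list above is straightforward to implement so that each respondent sees a different order while the order itself stays reproducible for the audit trail. This is a sketch using Python's standard `random` module; the seed label and question identifiers are hypothetical.

```python
import random

def question_order(question_ids, respondent_id, seed="materiality-survey"):
    """Return a per-respondent shuffle of the questions.

    Seeding with the survey label plus the respondent ID means the same
    respondent always gets the same order, so it can be reconstructed later.
    """
    rng = random.Random(f"{seed}:{respondent_id}")
    order = list(question_ids)  # copy so the master list is left untouched
    rng.shuffle(order)
    return order

questions = ["Q1", "Q2", "Q3", "Q4", "Q5", "Q6"]

print(question_order(questions, "respondent-001"))
print(question_order(questions, "respondent-002"))
```

Using a dedicated `random.Random` instance rather than the module-level functions keeps the shuffle independent of any other randomness in your tooling.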

With your questions refined, the next step is to design response scales that accurately capture participants' views.

Designing Fair Response Scales

Once your questions are clear and neutral, focus on creating response scales that are balanced and easy to understand. Research shows that reliability improves with more scale points, up to seven. Here's how to tailor your scales:

- Unipolar scales (measuring a single quality): Use five points, such as "Not at all satisfied" to "Very satisfied".

- Bipolar scales (measuring opposing qualities): Use seven points, such as "Completely dissatisfied" to "Completely satisfied".

Make sure your scales include an equal number of positive and negative options to avoid skewing results. For example, a balanced five-point scale might look like: "Very Poor, Poor, Neutral, Good, Very Good". As Godfred O Boateng from Northwestern University explains:

Response scales with five points are recommended for unipolar items... Seven response items are recommended for bipolar items.
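A balance check like the one described above can be automated before a survey goes out. The following is a rough sketch, assuming the scale labels are listed from most negative to most positive and that you name the neutral midpoint yourself; the function is illustrative, not a standard survey-tool API.

```python
def scale_problems(labels, midpoint=None):
    """List balance problems in an ordered response scale.

    labels: scale labels ordered from most negative to most positive.
    midpoint: the neutral label, or None for a scale without one.
    """
    problems = []
    if len(set(labels)) != len(labels):
        problems.append("duplicate labels")
    if midpoint is None:
        if len(labels) % 2 == 1:
            problems.append("odd number of points but no neutral midpoint given")
        return problems
    if midpoint not in labels:
        problems.append("midpoint label is not on the scale")
        return problems
    below = labels.index(midpoint)      # options on the negative side
    above = len(labels) - below - 1     # options on the positive side
    if below != above:
        problems.append(f"{below} negative vs {above} positive options")
    return problems

balanced = ["Very Poor", "Poor", "Neutral", "Good", "Very Good"]
skewed = ["Poor", "Neutral", "Good", "Very Good", "Excellent"]

print(scale_problems(balanced, midpoint="Neutral"))
print(scale_problems(skewed, midpoint="Neutral"))
```

The check only counts options on each side of the midpoint; whether the wording of each label is equally strong still needs a human read.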

For sensitive topics where respondents might try to give socially acceptable answers, consider using a forced-choice format. This approach asks participants to rank similarly desirable items, reducing biases like acquiescence or social desirability. Rodrigo Schames Kreitchmann from Universidad Autónoma de Madrid highlights:

The forced-choice format consists of blocks of items with similar social desirability, which respondents must fully or partially rank... it attenuates uniform biases such as ACQ and SDR.

Finally, keep your scales simple and intuitive to prevent "satisficing", where respondents provide quick, surface-level answers instead of thoughtful responses. Document your design decisions thoroughly to provide objective evidence for audits.

Step 4: Review Scoring and Ranking Methods

Take a close look at how you score and rank material topics. Subjectivity can easily slip in through gut feelings or inconsistent criteria, especially if there aren't clearly defined rules in place. By sticking to structured and documented processes, you can minimise bias and improve the reliability of your assessments.

Set Thresholds in Advance

Establish materiality thresholds ahead of time to keep the process objective. These predetermined cut-off points help you decide which topics deserve attention, moving away from informal or last-minute judgements. For example, a common benchmark is a 5% net income threshold, but you can adjust this based on other metrics like profit before tax or total assets.

It's important to set these thresholds early and involve your board or audit committee in the review process to ensure they align with your overall business strategy. Revisit these benchmarks regularly to account for changing trends. This forward-thinking approach prevents any temptation to "move the goalposts" after data is collected and ensures that resources are directed towards genuinely significant issues.
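To show what "setting thresholds in advance" can look like in practice, here is a small sketch in which the threshold is fixed and recorded before any data is scored. The class and field names are assumptions for illustration, and the 5% of net income figure is simply the benchmark mentioned above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)  # frozen: the threshold cannot be altered after sign-off
class Threshold:
    basis: str          # metric the threshold is measured against
    rate_percent: int   # e.g. 5 for 5%
    approved_on: str    # ISO date of board or audit committee approval

    def cutoff(self, basis_amount):
        """Monetary cut-off implied by the threshold."""
        return basis_amount * self.rate_percent / 100

def is_material(impact, basis_amount, threshold):
    """An item is material if its absolute impact meets or exceeds the cut-off."""
    return abs(impact) >= threshold.cutoff(basis_amount)

# Hypothetical figures: net income of 2,000,000 and a 5% threshold.
threshold = Threshold(basis="net income", rate_percent=5, approved_on="2025-01-15")
net_income = 2_000_000

print(threshold.cutoff(net_income))
print(is_material(120_000, net_income, threshold))
print(is_material(80_000, net_income, threshold))
```

Because the dataclass is frozen, any attempt to reassign `rate_percent` after approval raises an error, which mirrors the aim of not moving the goalposts once responses are in. Changing the threshold means creating a new, separately approved record.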

Weighting Stakeholder Inputs

After scoring individual topics, make sure the perspectives of different stakeholders are fairly represented in the final rankings. This step supports the objective standards you've already established. The cross-functional materiality working group from Step 1, with members from finance, operations, HR, and procurement, is well placed to do this. It can weigh stakeholder inputs and conduct trade-off analyses to ensure rankings align with your strategic goals, and it should record the weights it uses before the data is reviewed.
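A simple way to keep weighting transparent is to fix the stakeholder weights up front and apply them mechanically. The sketch below assumes each group's scores have already been averaged per topic; the groups, weights, and scores are hypothetical.

```python
def weighted_topic_scores(group_scores, weights):
    """Combine per-group topic scores using pre-agreed stakeholder weights.

    group_scores: dict of topic -> dict of group -> mean score for that group.
    weights: dict of group -> weight; weights must sum to 1.
    """
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    combined = {}
    for topic, by_group in group_scores.items():
        missing = set(weights) - set(by_group)
        if missing:
            # Fail loudly rather than silently dropping a stakeholder group.
            raise ValueError(f"{topic}: no score from {sorted(missing)}")
        combined[topic] = sum(weights[g] * by_group[g] for g in weights)
    return combined

# Hypothetical weights agreed before data collection.
weights = {"investors": 0.5, "employees": 0.25, "communities": 0.25}
scores = {
    "water use": {"investors": 2, "employees": 4, "communities": 5},
    "climate risk": {"investors": 5, "employees": 4, "communities": 3},
}

print(weighted_topic_scores(scores, weights))
```

Raising an error when a group has no score for a topic is a deliberate choice here: a missing group should be a documented decision, not an accident of the arithmetic.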

Step 5: Use Independent Validation and Review

Once you've established structured scoring and weighting for your material topics, it's time to bring in independent validation to ensure fairness and accuracy. This step is crucial for identifying any lingering biases that may have slipped through. By involving independent reviewers - whether colleagues outside the core team or external experts - you add an extra layer of scrutiny that enhances the credibility of your final report.

Internal and External Validation

To ensure a balanced review, engage a diverse and cross-functional team of independent validators. This group acts as a safeguard, ensuring no single department's perspective dominates the process. Your audit committee should also play an active role, regularly reviewing and challenging your materiality assessment, much like they would with external auditor materiality thresholds.

A dual approach works best here. A top-down review ensures alignment with board-level strategies, budgets, and risk registers. Meanwhile, a bottom-up review focuses on operational insights, comparing your findings with staff feedback, operational data, and peer benchmarks. Together, these methods provide a comprehensive check. As one non-executive director shared in Financial Reporting Council research:

If the market has a different view than management, it's important to ask – do they have a point? Is it something we haven't seen or that management maybe doesn't want to see?

Documenting dissenting views is a key part of the process. These records substantiate your validation efforts and provide transparency. Establish clear protocols for handling incomplete or conflicting feedback, and cross-check stakeholder responses with publicly available data and historical records to resolve any discrepancies.

Both internal and external reviews are essential for maintaining the accuracy and reliability of your materiality assessment.

Comparing Results Over Time

Your audit trail can be a valuable tool for tracking trends and identifying unexpected shifts. Annual comparisons reveal patterns and help flag anomalies. For example, if climate risk suddenly drops from one of your top three priorities to number ten without a clear rationale, it's worth investigating whether this reflects a genuine strategic shift or an oversight.
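The year-on-year check described above can be sketched as a comparison of two ranked lists. The topic names and the three-place tolerance are assumptions for illustration.

```python
def rank_shifts(previous, current, max_shift=3):
    """Flag topics whose rank moved more than max_shift places, or that
    appear in only one of the two years.

    previous, current: lists of topics ordered from highest priority down.
    Returns a dict of topic -> (previous rank, current rank); None = absent.
    """
    prev_rank = {t: i for i, t in enumerate(previous, start=1)}
    curr_rank = {t: i for i, t in enumerate(current, start=1)}
    flagged = {}
    for topic in prev_rank.keys() | curr_rank.keys():
        before, after = prev_rank.get(topic), curr_rank.get(topic)
        if before is None or after is None or abs(after - before) > max_shift:
            flagged[topic] = (before, after)
    return flagged

# Hypothetical rankings: climate risk falls from third to tenth.
last_year = [
    "data privacy", "health and safety", "climate risk", "supply chain labour",
    "water use", "waste", "board diversity", "tax transparency",
    "biodiversity", "community investment",
]
this_year = [
    "data privacy", "health and safety", "supply chain labour", "water use",
    "waste", "board diversity", "tax transparency", "biodiversity",
    "community investment", "climate risk",
]

print(rank_shifts(last_year, this_year))
```

A flag is a prompt for investigation rather than a verdict. The rationale for each flagged move belongs in the audit trail whichever way the review concludes.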

Cross-check these changes against internal budgets, risk registers, and external market sentiment to determine their validity. As one investor highlighted in Financial Reporting Council research:

What's material today might change in 12- or 24-months' time. You can't just work off your old materiality assessment, it needs to be current.

Regular trend analysis helps distinguish between legitimate shifts in priorities and inconsistencies caused by outdated methods. For organisations managing multiple clients' sustainability reporting, tools that support ISSB reporting with version control and historical comparison features can simplify this process while maintaining a complete audit trail. These platforms ensure your assessments remain both accurate and up to date.

Using Technology to Reduce Bias

Traditional materiality assessments often fall prey to human error and unconscious bias. By incorporating digital tools into your process, you can reduce subjectivity, maintain consistency, and create a transparent audit trail. Automating data collection and scoring helps minimise the chances of bias influencing critical decisions, enhancing the overall reliability of your results.

Standardising Data Collection with Technology

Digital tools build on established assessment methods by further streamlining and standardising data collection. For instance, platforms that integrate directly with your financial ledger eliminate the need for manual data entry, reducing errors and the risk of selective reporting. When sustainability data is automatically mapped from activity-based transactional records instead of being pieced together from spreadsheets, a major source of bias is removed. Tools like neoeco automatically link ledger entries to recognised emissions categories under GHGP and ISO 14064, ensuring assessments are based on reliable, finance-grade data.

Automated systems also enforce the use of predefined scorecards, ensuring uniform evaluations. By anonymising inputs, they further reduce the influence of individual or group biases. Additionally, these systems allow multiple team members to independently score data, which helps counteract groupthink and promotes a more balanced evaluation.

| Aspect | Manual Process | Automated Process |
|---|---|---|
| Data Quality | High error risk from manual entry and copy-pasting | Reduced errors through automated validation and system integration |
| Audit Readiness | Limited traceability and version control | Full audit trails with version control |
| Scalability | Difficult to manage with increasing data volumes | Scales efficiently with business growth |
| Transparency | Vulnerable to data manipulation and accountability issues | Clear data lineage and controlled access improve transparency |

Real-Time Dashboards for Monitoring

In addition to automating data collection, real-time dashboards offer continuous oversight and monitoring. These dashboards can quickly identify outliers, inconsistencies, and trends. For example, if one stakeholder group's responses suddenly deviate from historical patterns or industry benchmarks, the dashboard can flag this anomaly for review.
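The kind of anomaly flag described above can be approximated with a z-score against a group's own history. This is a minimal sketch, not how any particular dashboard product works; the two-standard-deviation limit and the sample figures are assumptions.

```python
from statistics import mean, stdev

def flag_deviation(history, latest, z_limit=2.0):
    """Return the z-score of the latest value if it is unusual, else None.

    history: past average scores for one stakeholder group on one topic.
    latest: the newest average score for the same group and topic.
    """
    if len(history) < 3:
        raise ValueError("need at least three past values to judge a deviation")
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        # No past variation: any change at all is worth a look.
        return None if latest == mu else float("inf")
    z = (latest - mu) / sigma
    return z if abs(z) > z_limit else None

# Hypothetical history of one group's average score on a topic.
supplier_history = [4.1, 4.0, 4.2, 3.9, 4.0]

print(flag_deviation(supplier_history, 2.5))  # sharp drop: flagged
print(flag_deviation(supplier_history, 4.1))  # within normal range: None
```

With only a handful of past assessments the standard deviation is a rough guide at best, so a flagged value should trigger a review of the underlying responses rather than an automatic correction.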

Dashboards also enable multidimensional mapping, which goes beyond simple power/interest grids. By incorporating factors like impact, criticality, effort, and position, they provide a more nuanced and balanced view of stakeholder importance. This ensures no single perspective dominates the assessment while offering clear documentation for auditors or external reviewers. For firms managing multiple clients' Scope 3 emissions or sustainability reports, these tools simplify oversight and maintain robust audit trails across all engagements.

Conclusion

Move from relying on gut feeling to a well-organised process by documenting your methods, involving a diverse range of stakeholders, and establishing clear scoring criteria. This shift helps transform subjective opinions into a framework that is both objective and defensible.

Transparency and detailed documentation are crucial safeguards. They help prevent accusations of greenwashing, build trust among stakeholders - even when difficult trade-offs arise - and ensure that complex, high-impact issues with unclear business cases aren't ignored in favour of easier, mutually beneficial options. The demand for robust assessments has grown alongside reporting itself: the percentage of S&P 500 companies publishing CSR reports jumped from 20% in 2011 to around 90% in 2019.

This structured methodology also paves the way for using technology to reduce bias. Tools like neoeco streamline data integration, minimise manual errors, and provide audit-ready documentation. These features ensure assessments are both reliable and objective.

Staying up to date with your assessments is equally important. Material issues shift over time due to advances in technology, new scientific findings, and changing public expectations. Regularly reviewing your process, combined with validation through both human expertise and technological tools, ensures your materiality assessments remain accurate and unbiased.

FAQs

How can organisations ensure fair and diverse stakeholder input in materiality assessments?

To ensure that materiality assessments genuinely reflect the perspectives of diverse stakeholders, organisations should begin with thorough stakeholder mapping. This means identifying all groups that your activities impact or who influence your operations. These groups might include investors, employees, customers, suppliers, local communities, regulators, NGOs, and emerging voices like climate-focused investors or diversity advocates. To avoid missing marginalised perspectives, draw on both internal sources (like board meeting minutes and employee surveys) and external sources (such as industry reports and public consultations).

When engaging stakeholders, use accessible and inclusive methods. Options like online surveys, interviews, focus groups, or public consultations can help you reach different audiences effectively. Make sure the formats you choose are suitable for the groups you’re targeting. It’s also crucial to document every step of the process, including any exclusions, to create an audit-ready trail that highlights fairness and transparency.

To keep diversity at the forefront, make stakeholder validation a regular part of your processes. Update your stakeholder registers frequently, conduct annual surveys, and stay aligned with evolving regulations like SECR and UK SRS. Tools like neoeco can simplify data collection, helping to ensure your assessments remain inclusive, precise, and ready for audits over time.

How can technology help reduce bias in materiality assessments?

Technology, especially AI-driven automation, can make materiality assessments more impartial. By automating the collection, cleaning, and standardisation of large volumes of financial, environmental, and stakeholder data, it limits the opportunity to cherry-pick data or make subjective decisions. This supports a more balanced and objective evaluation, reducing the chances of missing critical factors or focusing too heavily on familiar themes.

AI tools can sift through thousands of documents to extract ESG-related insights and score topics using consistent criteria. This not only helps counter unconscious bias but also ensures that decisions are grounded in evidence rather than personal opinions. Additionally, platforms that integrate with financial ledgers can categorise transactions into recognised emissions groups, providing precise, finance-grade carbon data that is ready for audits and free from manual errors.

By automating routine tasks, broadening the scope of analysis, and applying consistent data frameworks, technology improves the reliability and transparency of materiality assessments while significantly reducing the influence of human bias.

Why should materiality assessments be updated regularly?

Keeping materiality assessments updated is essential because they identify the most relevant information for stakeholders, including investors. If these assessments remain static, disclosures can quickly become outdated, failing to capture issues that influence a company’s performance or compliance requirements. With reporting standards like ISSB, CSRD, and UK SRS constantly evolving, staying current ensures that disclosures are both accurate and useful for decision-making.

Regular reassessments allow organisations to respond to shifts in regulations, market dynamics, and stakeholder priorities, helping to minimise the risk of non-compliance. Tools like neoeco, which integrate with financial systems, can streamline this process by linking transaction data to recognised emissions categories and delivering real-time updates. This ensures reports are aligned with the latest sustainability frameworks, preserving trust and enabling better-informed decisions.